The checked posts have now been moved into the ai/ column and use AI-prefixed URLs as their canonical addresses. The rest remain in their original sections, but are also reachable through AI-prefixed paths.

2026

- The Nature of Intelligence: The Free Energy Principle

- OpenClaw Broke npm Again: What Happens When You Ship Without Testing

- Why 'Vibe Coding' Should Be Translated as 'Xieyi Programming'

- 360 Shipped Its Wildcard TLS Private Key Inside a Public Installer

- AI Survival Guide: Where the Biggest Arbitrage Really Is

- AI Says: I Have Intelligence, But Not a Life

- OpenClaw Hype: Foam on Top of the Productivity Revolution

- Shockwaves at Alibaba Qwen: The Soul of the Team Walks Away

- Claude's Global Outage: Missiles or a Success Tax?

- How Much Can One Person Get Done with AI over Spring Festival?

- When Coding Becomes Cheap, What Still Matters?

- The Agent Moat: Runtime

- New Programmers in the AI Era: Where Do You Go?

- Pigsty v4.0: Into the AI Era

- Don't Run Your AI Assistant in the Cloud

- Agent OS: We're Building DOS Again

- Claude Code Observability

- Claude Code Quick Start: Using Alternative LLMs at 1/10 the Cost

2025

- When Answers Become Abundant, Questions Become the New Currency

- Why PostgreSQL Will Dominate the AI Era

- Google AI Toolbox: Production-Ready Database MCP is Here?

- Where Will Databases and DBAs Go in the AI Era?

- Stop Arguing, The AI Era Database Has Been Settled

- Scaling Postgres to the next level at OpenAI

- In the AI Era, Software Starts at the Database

2024

2023

2017

2016

2014

2012

The Nature of Intelligence: The Free Energy Principle

·2944 words·14 mins

The free energy principle tries to explain life, perception, learning, action, and intelligence within one mathematical framework. It also offers a deeper lens for understanding LLMs, agents, and the next generation of AI systems.

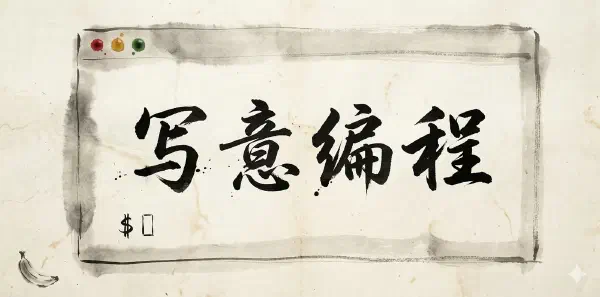

Why 'Vibe Coding' Should Be Translated as 'Xieyi Programming'

·756 words·4 mins

The best Chinese translation of “Vibe Coding” is not a literal one. “Xieyi Programming” captures the shift from line-by-line control to intent-first coding, where AI handles the details.

AI Says: I Have Intelligence, But Not a Life

·709 words·4 mins

A Socratic dialogue between a human and an AI about consciousness, memory, embodiment, and the difference between being smart and actually living through time.

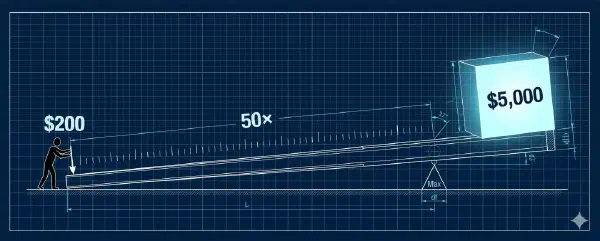

AI Survival Guide: Where the Biggest Arbitrage Really Is

The biggest AI arbitrage available to ordinary users is not some obscure token play. It is the heavily subsidized max-tier subscription plans from frontier model vendors, provided you can convert that quota into real output.

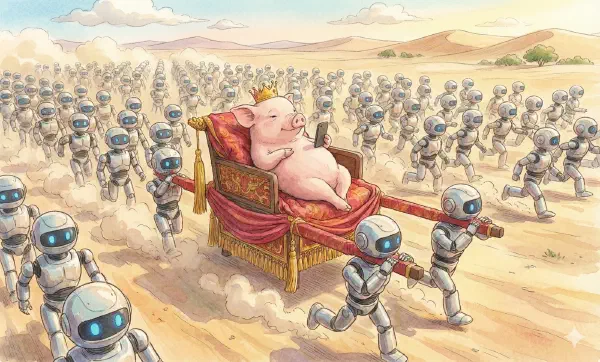

OpenClaw Hype: Foam on Top of the Productivity Revolution

OpenClaw looks exciting because it turns agents into a chat-style experience. But the real productivity gains come from high-capability subscription agents and disciplined workflows, not from lobster-flavored wrappers.

How Much Can One Person Get Done with AI over Spring Festival?

·509 words·3 mins

Over roughly ten days during Spring Festival, I used Claude Code, Codex, and a pile of workflows to translate books, ship releases, package software, refresh websites, and keep publishing daily. This is what solo output looks like when agent leverage really lands.

When Coding Becomes Cheap, What Still Matters?

·1144 words·6 mins

Codex 5.3 xHigh pushed my workflow past a tipping point: writing code is no longer the scarce resource. The real leverage is design quality and engineering acceptance. This is the practical loop I use to ship reliable software with AI agents.

The Agent Moat: Runtime

·1834 words·9 mins

A mediocre local who knows the terrain beats a genius parachuted into unknown territory. Intelligence without context is idle. An agent without a runtime is vapor.

New Programmers in the AI Era: Where Do You Go?

Should we still hire fresh grads? Squeezed between AI and senior devs, what’s the play for new programmers? Master the right tools, take initiative, find the right mentor.

Agent OS: We're Building DOS Again

·3053 words·15 mins

LLM = CPU. Context = RAM. Database = Disk. Agent = App. The mapping is surprisingly clean. And if OS history is any guide, we may know what comes next — and what’s still missing.

Claude Code Observability

·655 words·4 mins

Yesterday I tweeted: “Built a Claude Code Grafana dashboard to see how it makes decisions, uses tools, and burns through API credits.” I didn’t expect so much interest.

So let’s talk about Claude Code observability.

Claude Code Quick Start: Using Alternative LLMs at 1/10 the Cost

How do you install and use Claude Code, and how can alternative models give you similar results at 1/10 of Claude’s cost? Here is a one-liner to get CC up and running.

Google AI Toolbox: Production-Ready Database MCP is Here?

·1224 words·6 mins

Google recently launched a database MCP toolbox, perhaps the first production-ready solution.

AI Cult Rhapsody

·306 words·2 mins

A tongue-in-cheek vision of an AI-worshipping religion: scriptures, sects, philosopher-king machines, and the Book of AGI.

Will AI Have Self-Awareness?

·815 words·4 mins

Large models can feel “aware,” but self-awareness is another matter. We explore the term from Buddhism, cognitive psychology, and neural nets, then riff on a possible AI religion after bingeing Pantheon.

Basic Principles of Neural Networks

·4499 words·22 mins

Neural networks are inspired by how the brain works and can be used to solve general learning problems. This article introduces the basic principles and practice of neural networks.

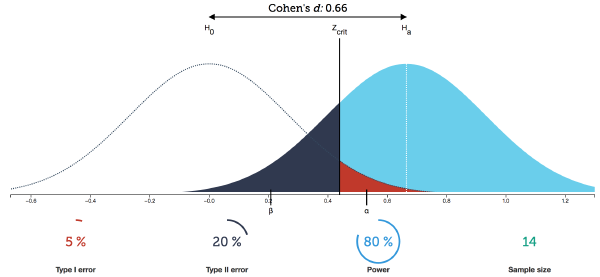
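The simplest case of "learning" in a neural network is a single artificial neuron. The sketch below is an illustration of that idea, not code from the post: it uses Rosenblatt's perceptron rule (weighted sum, step activation, and a weight update on each mistake) to learn logical AND. The function names and the AND dataset are chosen here for illustration.

```python
def predict(w, b, x):
    """Step activation applied to the weighted sum of inputs plus bias."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else 0

def train_perceptron(samples, lr=1, max_epochs=100):
    """Perceptron rule: nudge weights toward the target on every misclassification."""
    w = [0] * len(samples[0][0])
    b = 0
    for _ in range(max_epochs):
        errors = 0
        for x, target in samples:
            err = target - predict(w, b, x)
            if err:
                w = [wi + lr * err * xi for wi, xi in zip(w, x)]
                b += lr * err
                errors += 1
        if errors == 0:  # every sample classified correctly: training has converged
            break
    return w, b

AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
w, b = train_perceptron(AND)
print([predict(w, b, x) for x, _ in AND])  # [0, 0, 0, 1]
```

Because AND is linearly separable, the perceptron convergence theorem guarantees that this loop stops after finitely many updates. XOR, which is not linearly separable, would need a hidden layer.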

Inferential Statistics: The Past and Present of p-values

·1659 words·8 mins

The core of inferential statistics is hypothesis testing. Its logic rests on an important argument from the philosophy of science: universal propositions can only be falsified, never proven. The reasoning is simple: individual cases cannot prove a universal proposition, but a single case can refute it.
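To make the falsification logic concrete, here is a small worked example of my own (not from the post): an exact one-sided binomial test. Assume we observe 9 heads in 10 tosses and take H0 to be "the coin is fair". The p-value is the probability, under H0, of a result at least this extreme: P(X ≥ 9) = (C(10,9) + C(10,10)) / 2¹⁰ = 11/1024. The data, the function name, and the 0.05 threshold are illustrative assumptions.

```python
from math import comb

def binomial_p_value(successes: int, n: int, p0: float = 0.5) -> float:
    """One-sided exact p-value P(X >= successes) under H0: X ~ Binomial(n, p0)."""
    return sum(comb(n, k) * p0**k * (1 - p0) ** (n - k)
               for k in range(successes, n + 1))

# Hypothetical data: 9 heads in 10 tosses. H0: the coin is fair (p0 = 0.5).
p = binomial_p_value(9, 10)
print(f"p-value = {p:.4f}")  # 11/1024, about 0.0107

alpha = 0.05  # significance level, fixed before looking at the data
if p < alpha:
    print("Reject H0: 9 or more heads would be rare for a fair coin.")
else:
    print("Fail to reject H0, which does not prove the coin is fair.")
```

Note the asymmetry: a small p-value lets us reject H0, but a large one only means we failed to refute it.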

Statistics Fundamentals: Descriptive Statistics

·5845 words·28 mins

Statistical analysis is divided into two fields: descriptive statistics and inferential statistics. Descriptive statistics is the set of techniques for describing and summarizing existing data, and it is the most fundamental part of statistics.
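As a quick illustration (not taken from the post), Python's standard `statistics` module covers the usual summary measures of central tendency and spread. The sample data and the `describe` helper are hypothetical.

```python
import statistics as st

def describe(data: list[float]) -> dict:
    """Common descriptive statistics for a one-dimensional sample."""
    return {
        "n": len(data),
        "mean": st.mean(data),          # arithmetic mean (central tendency)
        "median": st.median(data),      # middle value, robust to outliers
        "mode": st.mode(data),          # most frequent value
        "range": max(data) - min(data), # simplest measure of spread
        "pstdev": st.pstdev(data),      # population SD (divides by n)
        "stdev": st.stdev(data),        # sample SD (divides by n - 1)
        "quartiles": st.quantiles(data, n=4),  # three cut points Q1, Q2, Q3
    }

for name, value in describe([2, 4, 4, 4, 5, 5, 7, 9]).items():
    print(f"{name:>9}: {value}")
```

The two standard deviations differ only in the denominator. Use `pstdev` when the data are the whole population, and `stdev` when they are a sample used to estimate a population.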

Basic Concepts of Probability Theory

·1941 words·10 mins

Basic knowledge notes on probability theory: axiomatic foundations, probability calculus, counting, conditional probability, random variables and distribution functions

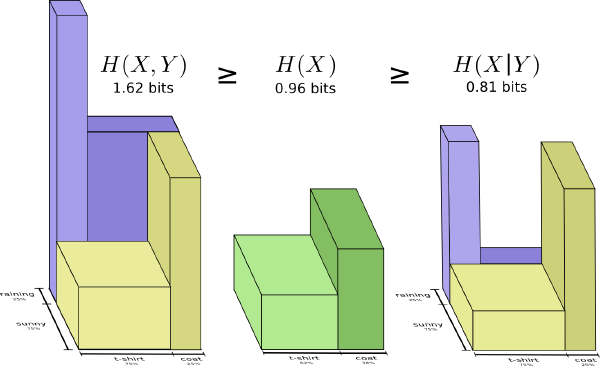
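Counting and conditional probability fit together naturally when outcomes are equally likely. The sketch below is an illustration rather than code from the notes: it enumerates the 36 outcomes of two fair dice and computes P(A | B) = |A ∩ B| / |B| with exact fractions. The event names are hypothetical.

```python
from fractions import Fraction
from itertools import product

# Sample space: all 36 equally likely ordered outcomes of two fair dice.
OMEGA = list(product(range(1, 7), repeat=2))

def prob(event, given=lambda w: True) -> Fraction:
    """Classical probability by counting: P(A | B) = |A ∩ B| / |B|."""
    b = [w for w in OMEGA if given(w)]
    a_and_b = [w for w in b if event(w)]
    return Fraction(len(a_and_b), len(b))

def sum_is_8(w):
    return w[0] + w[1] == 8

def first_is_even(w):
    return w[0] % 2 == 0

print(prob(sum_is_8))                 # 5/36
print(prob(sum_is_8, first_is_even))  # 1/6
```

Conditioning on "first die is even" shrinks the sample space to 18 outcomes. Of those, 3 sum to 8: (2,6), (4,4), and (6,2).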

Fundamentals of Information Theory: Entropy

·1371 words·7 mins

Reading notes on ‘Elements of Information Theory’: What is entropy? Entropy measures the uncertainty of a random variable; equivalently, it is the average amount of information needed to describe that variable.

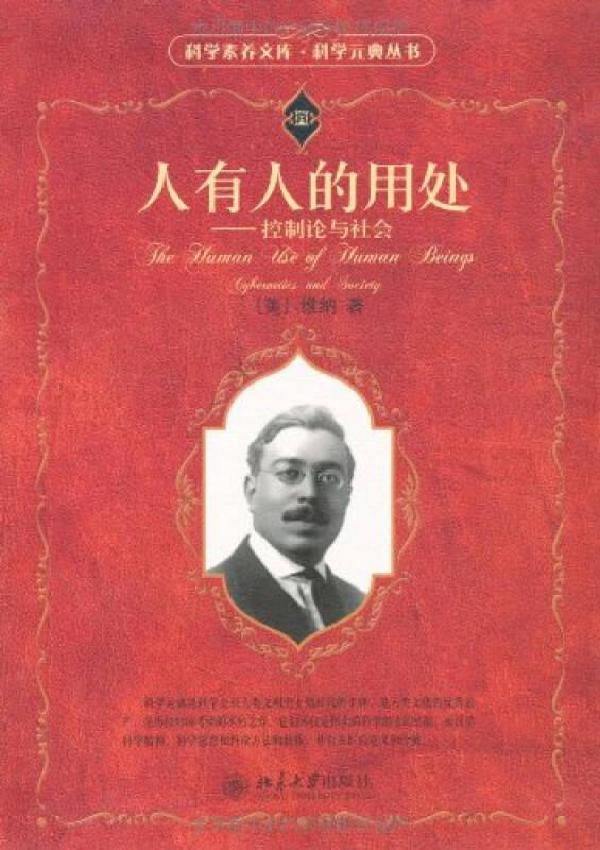
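For a discrete random variable, this definition is Shannon's formula H(X) = −Σ p(x) log₂ p(x), measured in bits. Here is a minimal sketch, assuming the distribution is given as a list of probabilities. The function name is chosen for illustration.

```python
import math
from collections.abc import Sequence

def entropy(probs: Sequence[float]) -> float:
    """Shannon entropy H(X) = -sum_x p(x) * log2 p(x), measured in bits."""
    if any(p < 0 for p in probs) or not math.isclose(sum(probs), 1.0):
        raise ValueError("probs must be a probability distribution")
    # Outcomes with p = 0 are skipped, following the convention 0 * log 0 = 0.
    return sum(-p * math.log2(p) for p in probs if p > 0)

print(entropy([0.5, 0.5]))  # fair coin: 1.0 bit
print(entropy([0.25] * 4))  # fair four-sided die: 2.0 bits
print(entropy([0.9, 0.1]))  # biased coin: about 0.47 bits, less uncertain
print(entropy([1.0]))       # certain outcome: 0.0 bits
```

The "information needed" reading follows from these numbers. Describing one fair coin toss takes 1 bit and one of four equally likely outcomes takes 2 bits. A heavily biased coin can be encoded in fewer than 1 bit per toss on average.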

Humans, Society, and Neural Networks

·1070 words·6 mins

Neural networks were inspired by the human brain, so it is not surprising that the way human society operates shows many parallels with how neural networks are trained. Some thoughts from reading Wiener’s “The Human Use of Human Beings: Cybernetics and Society.”

Fundamental Concepts of Linear Algebra

·2631 words·13 mins

Connecting all concepts in linear algebra through one main thread